What came to your mind when you read “hash functions” in the title?

If you’re pragmatic, you probably remembered SHA-256 or MD5. Those are cryptographic hash functions, and they work fast and well for arbitrary inputs, even if they are supplied by malicious actors. Or at least they’re designed to.

That’s the exact opposite of what I want to talk about. I want to talk about the cheapest, stupidest, most ridiculous hash functions you can get away with.

As good a conversation starter as any. Here’s what the core of rapidhash looks like:

hash = 0 # a 64-bit variable

seed = ... # a hidden constant

while still has data:

# read the next 128 bits (16 bytes) of input

a, b = next_64_bits(), next_64_bits()

# compute this weird formula, producing a 128-bit output

product = (a ^ hash) * (b ^ seed)

# XOR the two 64-bit halves together

hash = (product % 2**64) ^ (product >> 64)

return hash

I’d rate this hash function AAA, for the scream you might witness if you show this to a cryptographer. Suffice to say, we don’t have any estimate on how cooked you are if you use this in a critical service. If you’re processing user input, it’s possible (or at least not known to be hard) to generate many inputs that produce the same hash, overloading the hash table and causing your application to grind to a halt.

But what if you don’t have adversaries? What if you’re processing vetted song lyrics, or maybe writing a compiler? Now we’re talking: rapidhash is clearly bloat, we don’t want those pesky XORs and multiplications, and we can find something cheaper.

Like addition! Here’s your hash:

hash = 0

while still has data:

hash = (hash + next_32_bits()) % 2**32

return hash

It reliably reacts to shifts, removals, and doesn’t use the same hash for similar strings. It doesn’t recognize reordering, but maybe it’s not a problem on your data! Or maybe it is, but then you just add a rotate to make the operation non-commutative:

hash = 0

while still has data:

hash = ((hash << 3) % 2**32) | (hash >> 29)

hash = (hash + next_32_bits()) % 2**32

return hash

Now that I think about it, not all bytes need to contribute to the hash. We could sample words randomly or skip every second word. Save electricity!

It’s beautiful, it’s clever, it’s art – the three vital characteristics of a hash function.

Well, look at it like this: while I wouldn’t use this in production without a paper trail, it doesn’t mean it’s right to ignore the option altogether.

For one thing, maybe you’re working with static data or can otherwise verify you’re not vulnerable to HashDoS. Maybe you’re doing retrocomputing and need something extremely cheap. Or maybe you are hard-pressed to produce a hash within

Stripping hashes to the bare minimum also shows how expensive hash tables usually are. I’ve seen JS projects using `${x},${y}` as a map key instead of nesting arrays, and I always wonder if we’d need to optimize tables if people used the right data structure. Maybe you’ll become a responsible programmer after reading this, who knows!

So what makes a hash “good”? Here’s a quick refresher on hash tables:

Say we want a hashmap to store

entries. To do this, make an array of buckets, each of which is in turn an array of entries. Each entry is pushed to bucket . To access the value by key, iterate over the key’s bucket until you find the key you searched for. If the hash is “good enough”, entries will be distributed roughly equally and buckets will be small, so iteration will be fast.

The remainder is used because we can’t allocate all

Many functions only satisfy the first requirement. For example, if you’re hashing a

Let’s take a look at a few examples. We’ll start with paragraphs from this blog as a known-good dataset and the addition-based hash.

print("total strings:", len(strings))

print("average length:", sum(map(len, strings)) / len(strings))

print("example string:", random.choice(strings))

total strings: 3579

average length: 217.69935736239174

example string: `next_f32` is implemented by generating $24$ random bits and dividing them by $2^{24}$. Such a division is exact, and casting `f32` to `f64` is always exact as well, so the last line of `is_bedrock` is equivalent to:

The most basic characteristic is bit probabilities. In an ideal world,

stats = [0] * 32

for string in strings:

h = addition_hash(string)

for bit in range(32):

stats[bit] += (h >> bit) & 1

for bit in range(32):

print(round(stats[bit] / len(strings) * 100) - 50, "%", sep="", end="\t")

The actual probabilities are within

This is further confirmed by bit correlation, which measures the repetitiveness of information stored in different bits.

correlation = [[0] * 32 for bit1 in range(32)]

for string in strings:

h = addition_hash(string)

for bit1 in range(32):

for bit2 in range(bit1):

correlation[bit1][bit2] += ((h >> bit1) & 1) == ((h >> bit2) & 1)

for bit1 in range(32):

for bit2 in range(bit1 + 1, 32):

correlation[bit1][bit2] = correlation[bit2][bit1]

for bit1 in range(32):

for bit2 in range(32):

if bit1 == bit2:

cell = ""

else:

cell = str(round(correlation[bit1][bit2] / len(strings) * 200) - 100)

print(cell, end="\t")

print()

The heatmap is mostly well-behaved, with a lone

# There are 3579 strings, which is about 2^12, so let's allocate a hash table that large.

hash_table = [0] * 4096

collisions = 0

for string in strings:

bucket = addition_hash(string) & 4095

collisions += hash_table[bucket]

hash_table[bucket] += 1

print("collisions:", collisions)

collisions: 1643

For comparison, SHA-256 produces

If the input gets shorter or more well-structured, though, cracks begin to appear.

Say we’re hashing domain names. I’ll use top 5000 domains according to Cloudflare.

total strings: 5000

average length: 12.0444

example string: thawte.com

Since high bits of ASCII letters are always fixed, there are island of predictability every 8 bits, four in total. This significantly increases the collision rate:

Adding rotation to the mix only partially improves the situation: while bits become more random, the correlation never goes away. Perhaps that’s the wrong approach: the addition hash has zero collisions on the full

Sometimes the best way to improve entropy is to run a few more rounds of the core algorithm, e.g. by virtually appending null bytes to the input. For instance, rapidhash does something like that.

But more often, the finalization round has completely different properties, because its purpose is to make the hash easier to consume for hash tables, not to reduce collisions per se. In many cases, this transform is designed to be one-to-one.

Multiplication is a very efficient way to spread entropy across multiple bits:

a = 0x5c57fb3fbdb59af7

b = 0xf95b4f985f327714

hex((a * b) % 2**64) # 0xd9f6efcc2a76ec4c

a ^= 1 << 17 # flip one bit

hex((a * b) % 2**64) # 0x7927ae31189eec4c

Suppose

a = 0x5c57fb3fbdb59af7

b = 0xf95b4f985f327714

hex(a * b) # 0x59f2835d6c4ac70bd9f6efcc2a76ec4c

a ^= 1 << 17 # flip one bit

hex(a * b) # 0x59f2835d6c4cb9c27927ae31189eec4c

In this example, flipping the

old: 0x59f2835d6c4 ac70bd9f6efcc2a76 ec4c

new: 0x59f2835d6c4 cb9c27927ae31189e ec4c

So by XORing the two halves of the full

a = 0x5c57fb3fbdb59af7

b = 0xf95b4f985f327714

hex(((a * b) % 2**64) ^ ((a * b) >> 64)) # 0x80046c91463c2b47

a ^= 1 << 17 # flip one bit

hex(((a * b) % 2**64) ^ ((a * b) >> 64)) # 0x20d52d6c74d2558e

This operation is called folded multiplication, and it’s the core of foldhash and some other non-cryptographic hash functions. Applying this after the addition hash yields only

Technically, this hash still isn’t great. We’d like bit flips to affect the output completely randomly, flipping each output bit with a

Remember how flipping a bit affects about

The main contributing factor to the output bit flip is the carry-in. It happens if

b = 0xf95b4f985f327714

max_error = 0

for i in range(1, 65):

max_error = max(max_error, b % 2 ** i / 2 ** i - 0.5)

print(max_error) # goal: 0, max: 0.5

0.47491029649972916

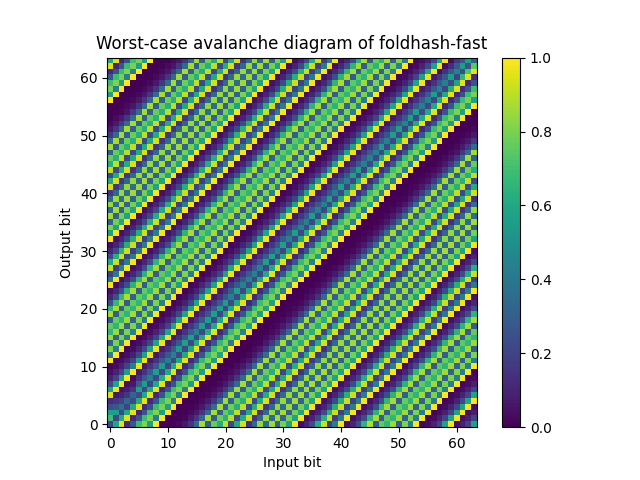

The foldhash README neatly visualizes this failure:

Since a single foldmul doesn’t guarantee a good reaction to bit flips, foldhash chains two foldmuls in hopes that distributing entropy twice does so more evenly, and that seems to work, though we don’t really know why:

If this section was confusing, don’t worry, I just wanted to yap about how complex hashes used in the wild are, because that isn’t really necessary.

For one thing, if you’re using a separate chaining hash table, that’s an overkill. Its design requires the 0x00 and 0x80 in different buckets, as long as the hash table has at least

Now imagine a world where people realized that early and designed APIs accordingly, because that’s not the world we live in.

Recall that multiplication can easily move entropy upward, but we need to move it downward. We can steal the workaround from foldmul: compute a wide product with a constant and take its high half. That produces high-quality results, and provably so. That’s great, except it’s completely unnecessary – had hash tables used the few top bits of the hash as the bucket index instead of the few bottom bits, we could use a normal truncated product, which is faster to compute on most hardware.

Granted, that only works reliably for separate chaining, and not open addressing. But SwissTable keeps

Since HashBrown requires bottom bits, rustc-hash uses rotate_left to move entropy downward, which is imperfect, but it’s the fastest way to do so (byte swapping, CRC, and pext are all slower). Abseil uses low bits too, and Folly gives you a choice between using low bits or automatically mixing bits with CRC or ad-hoc foldmul.

Here’s your recipe for a simple hash function:

crc32 instruction, or maybe try bswap.Would you like me to provide a code example?

I probably had more fun writing this section than you'll have reading it. Sorry if you groaned. If you found this paragraph by accident, let me know how, I'd love to know if you're using w3m or something.